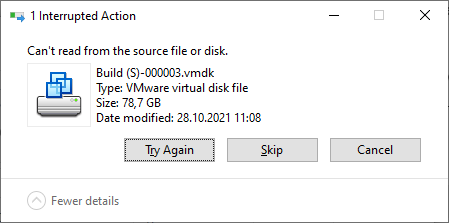

I needed to copy some huge files, used by VMware Workstation, to a new machine. I used Windows Explorer and simply dragged the folder to the new destination. However, after a few minutes the copy aborted with the following error:

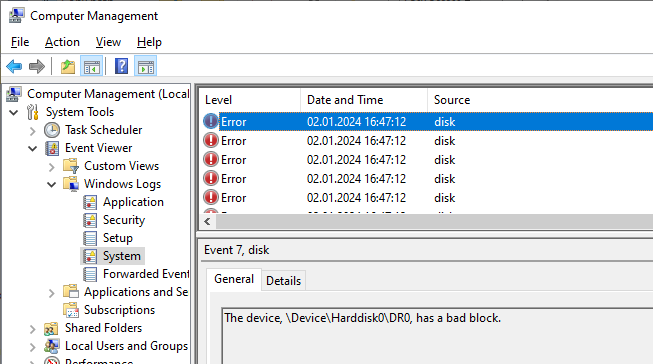

I launched the Computer Management console and opened Windows Logs/System under the Event Viewer node. Looking at the event log confirmed that my disk has some bad blocks:

I confirmed this by running `chkdsk /scan /r C:`. However, after rebooting and attempting a repair, the error still prevented me from copying the huge file.

Force Copy to the rescue

Looking for a solution, I came across an old post from Davor Josipovic (June 2013). Davor had written a PowerShell script which tries to copy a file, skipping the bad blocks and replacing them with zeroes if multiple read attempts don’t succeed.

I downloaded the `Force-Copy.ps1` file and copied it to my `C:\Tools` folder. As explained by Davor, trying to execute it directly fails with the following message:

    C:\Tools\Force-Copy.ps1 : File C:\Tools\Force-Copy.ps1 cannot be loaded.
    The file C:\Tools\Force-Copy.ps1 is not digitally signed. You cannot
    run this script on the current system. For more information about running
    scripts and setting execution policy, see about_Execution_Policies at
    https:/go.microsoft.com/fwlink/?LinkID=135170.

However, rather than changing the execution policy globally, I prefer to unblock just that file:

    Unblock-File -Path C:\Tools\Force-Copy.ps1

Copying the damaged file

Now that I have unblocked the file without changing the execution policy of my system, I can run the script to copy the damaged file:

    C:\Tools\Force-Copy.ps1 `
      -SourceFilePath "Build (S)-000003.vmdk" `
      -DestinationFilePath "D:\VMs\Build (S)-000003.vmdk"

I tried using the `-BufferSize 33554432 -BufferGranularSize 4096` arguments to speed up the whole operation by a factor of 5. However, the copy did not work as advertised: it produced a hole of 32 MB in the file, rather than discarding just 4096 bytes.

The `-BufferSize 33554432` argument tells the script to attempt to read chunks of 32 MB each, and `-BufferGranularSize 4096` ensures that, once an error is encountered, the reads are done with the minimal cluster size. This makes the whole process much faster.
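The strategy those two arguments describe can be sketched as follows. This is a minimal illustration in Python, not Davor's PowerShell implementation: `force_copy`, `read_at`, and `FlakySource` are hypothetical names, the retry count is an assumption, and the damaged disk is simulated in memory. Large reads are tried first; only when one fails is the same range re-read in small granules, and a granule that still cannot be read after several attempts is replaced with zeroes.

```python
import io


def read_at(src, offset, length):
    """Read exactly `length` bytes at `offset`; raise OSError on a short read."""
    src.seek(offset)
    data = src.read(length)
    if len(data) != length:
        raise OSError(f"short read at offset {offset}")
    return data


def force_copy(src, dst, size, buffer_size=32 * 1024 * 1024,
               granular_size=4096, retries=3):
    """Copy `size` bytes from src to dst, zero-filling unreadable granules.

    Returns the (offset, length) ranges that were replaced with zeroes.
    """
    bad_ranges = []
    for offset in range(0, size, buffer_size):
        length = min(buffer_size, size - offset)
        try:
            # Fast path: read the whole buffer in one go.
            data = read_at(src, offset, length)
        except OSError:
            # Slow path: re-read the failed buffer one granule at a time.
            data = bytearray()
            for g in range(offset, offset + length, granular_size):
                g_len = min(granular_size, offset + length - g)
                for _ in range(retries):
                    try:
                        data += read_at(src, g, g_len)
                        break
                    except OSError:
                        continue
                else:
                    # Every attempt failed: substitute zeroes for this granule.
                    data += bytes(g_len)
                    bad_ranges.append((g, g_len))
        dst.seek(offset)
        dst.write(data)
    return bad_ranges


class FlakySource(io.BytesIO):
    """In-memory stand-in for a damaged disk: reads touching `bad_offset` fail."""

    def __init__(self, payload, bad_offset):
        super().__init__(payload)
        self.bad_offset = bad_offset

    def read(self, size=-1):
        start = self.tell()
        end = len(self.getvalue()) if size is None or size < 0 else start + size
        if start <= self.bad_offset < end:
            raise OSError("simulated bad block")
        return super().read(size)


# Small-scale demo: 16 KiB of data with one unreadable byte at offset 5000.
payload = bytes(range(256)) * 64
src = FlakySource(payload, bad_offset=5000)
dst = io.BytesIO()
holes = force_copy(src, dst, len(payload), buffer_size=8192, granular_size=4096)
print(holes)  # → [(4096, 4096)]
```

In this simulation only the 4096-byte granule containing the bad offset is zeroed, while the rest of the failed 8192-byte buffer is recovered. With the real values from the command line, one 32 MB buffer corresponds to 8192 granules of 4096 bytes, so a correctly working fallback should lose at most a few granules rather than the whole buffer.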